Instagram Sharenting Protection Feature - 2023

Mitigating Digital Privacy Risks on Social Media

AI-Integrated Posting Flow Elevated Parental Privacy Awareness and Protected Children's Online Identities

My Role

UX/UI Designer & Researcher

Mixed-Methods Research, Interaction Design, Affinity Mapping, Brainstorming, Information Architecture, Prototyping, Usability Testing

Team

Me (UX/UI Designer)

1 UX Researcher

1 Visual Designer

1 Notetaker

Overview

I co-led the end-to-end design of a social computing feature integrated directly into Instagram's image posting flow.

By utilizing artificial intelligence to proactively detect sensitive child data, we empowered parents to share safely while preserving their children's digital footprint.

Timeline

26 Weeks

✨

HIGHLIGHTS

Transforming how parents share memories by integrating proactive risk detection and intuitive privacy

controls directly into Instagram

✨

CONTEXT

When sharing memories unintentionally

exposes children to long-term risks

A modern struggle between parental

pride and child privacy

In the digital age, parents frequently document their children's milestones on social media, a practice known as Sharenting (Sharing + Parenting).

A 2021 study found that 95% of parents with young children share photos online, totaling over 90 million photos daily.

While done to celebrate achievements and connect with family, it often occurs without the child's consent and leaves a lasting, vulnerable digital footprint.

✨

PROBLEM

Is it safe to post this picture of my child?

A well-intentioned practice exposing a massive privacy and safety flaw

What seems like harmless sharing exposes children to severe, long-term risks.

Barclays Bank predicts that by 2030, 7.4 million identity fraud cases annually could be linked to sharenting.

Even worse, Australian e-Safety investigators suggest up to 50% of pedophilic images on websites can be traced back to parents' social media accounts.

Children face risks of:

Identity Theft

Cyberbullying

Reputational Damage

Emotional Distress

✨

THE PROBLEM STATEMENT

How might we develop a social computing product that empowers parents to make informed decisions about

the information they share about their children online,

mitigating risks without entirely limiting

their ability to post memories?

✨

WHY RESEARCH?

Before solutions, we asked better questions

Children prioritize privacy while

parents seek connection

We needed to understand the disconnect between parental intentions and privacy risks, and why current social platforms fail to protect children's digital footprint.

We used a mixed-methods approach to turn assumptions into evidence:

Secondary Research:

Reviewed data on identity fraud and pedophilic image sourcing related to social media.

Quantitative Survey & Interviews:

Gathered responses and conducted interviews with 35 children and 15 parents.

Affinity Mapping:

Synthesized qualitative data to categorize user pain points into distinct themes, such as Parent Knowledge Over Sharenting, Security Concerns, Cyberbullying, and Identity Theft.

✨

WHY RESEARCH?

Most parents are cautious but lack the technical

knowledge to identify risks

Understanding where the disconnect happens

Our research revealed that the root of the problem wasn't malicious intent, but a systemic tension between parental connection and child privacy.

Blind Decisions:

Parents share to build relationships and show parental pride, but do not know how to spot hidden metadata or visual risks.

Anxiety with Digital Footprints:

Children expressed deep anxiety over their future reputation and lack of consent. One child noted, "People got to know things about me that I wanted confidential... it made me uncomfortable as people judged me".

✨

RESEARCH TAKEAWAY

These insights shaped our 3 core design principles:

Proactive Risk Detection

Contextual Education

Empowered Parental Control

✨

DESIGN & ITERATION

Rapid Prototyping and Feature Prioritization

Prioritization of Key Features

Working as a team, we conducted extensive brainstorming sessions, generating ideas ranging from age-based defaults to AI photo analysis.

Through collaborative dot-voting and feature prioritization, we evaluated technical feasibility and zeroed in on an AI intervention.

We defined the Information Architecture to ensure the flow felt like a natural extension of Instagram, and prioritized rough sketches and paper prototypes to quickly validate concepts.

AI-Driven Image Analysis:

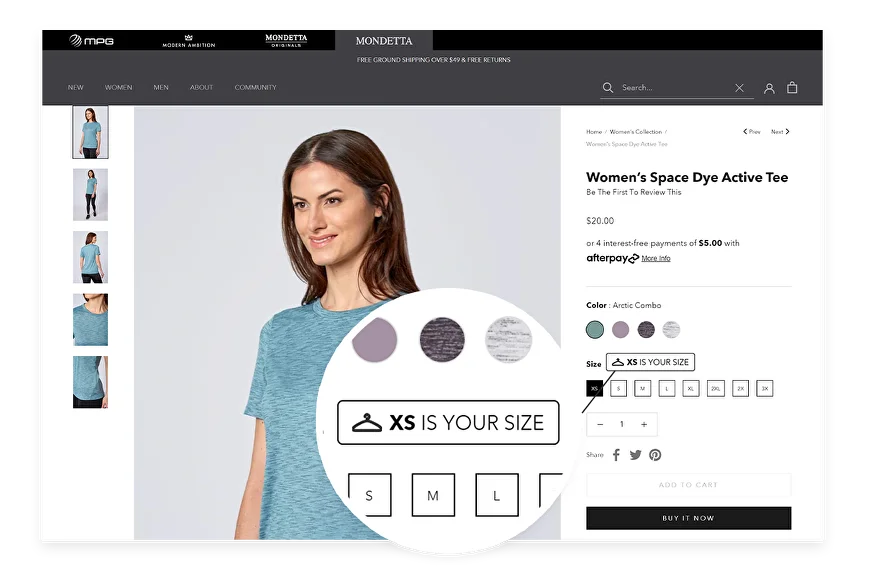

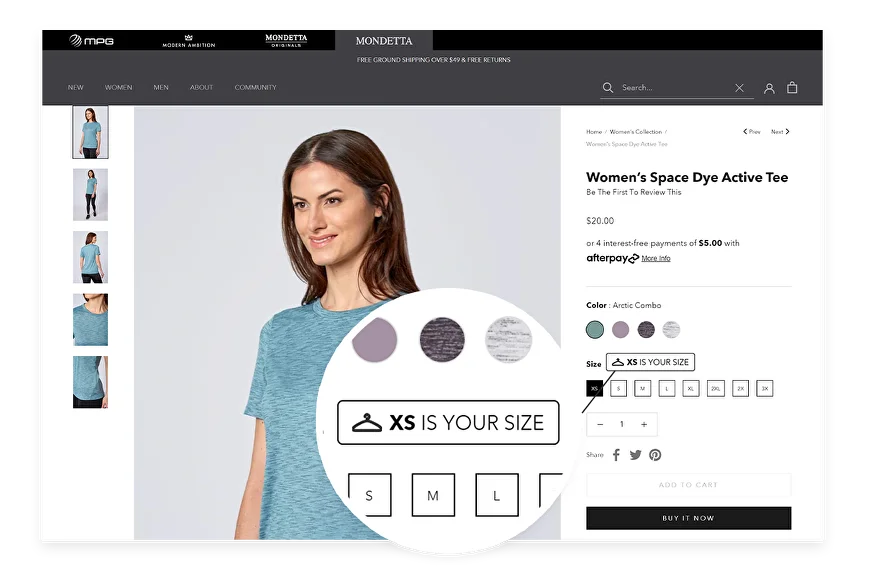

Designed a scanning flow using object recognition to detect faces and locations, offering options to Edit (blur/pixelate), Replace, or Delete.

Contextual Metadata Analysis:

Mapped a flow using NLP to scan captions/geotags, suggesting safer wording or restricting audience visibility to "Close Friends".

Sharental Dashboard:

Grouped privacy insights into a dedicated hub with anti-screenshot toggles.
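As a rough illustration of the Contextual Metadata Analysis flow described above, the sketch below scans a caption and geotag and suggests a restricted audience when something sensitive is found. It is a minimal sketch, not the production design: the prototype envisioned NLP, whereas this example swaps in plain regular-expression rules, and the names `RULES`, `Flag`, `scan_post`, and `suggest_audience` are hypothetical.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical rule set (an assumption for illustration, not the real model):
# each entry is (label, pattern, rationale shown to the parent).
RULES = [
    ("school",
     re.compile(r"\b(school|kindergarten|preschool|daycare)\b", re.I),
     "Naming a school or daycare can reveal where a child is on weekdays."),
    ("birthday",
     re.compile(r"\b(birthday|born on)\b", re.I),
     "Birth dates are commonly used in identity checks."),
    ("address",
     re.compile(r"\b\d+\s+\w+\s+(street|avenue|road)\b", re.I),
     "A street address pinpoints the family home."),
]


@dataclass
class Flag:
    label: str      # which rule fired
    match: str      # the text that triggered it
    rationale: str  # why it is considered a risk


def scan_post(caption: str, geotag: Optional[str] = None) -> List[Flag]:
    """Return one Flag per rule that matches the caption, plus one for a geotag."""
    flags = []
    for label, pattern, rationale in RULES:
        found = pattern.search(caption)
        if found:
            flags.append(Flag(label, found.group(0), rationale))
    if geotag:
        flags.append(Flag("geotag", geotag,
                          "A geotag attaches a precise location to the photo."))
    return flags


def suggest_audience(flags: List[Flag]) -> str:
    """Suggest limiting visibility only when at least one risk was flagged."""
    return "Close Friends" if flags else "Unchanged"


flags = scan_post("Happy birthday Mia! First day at Lincoln Elementary school",
                  geotag="Austin, TX")
for flag in flags:
    print(f"{flag.label}: '{flag.match}' ({flag.rationale})")
print("Suggested audience:", suggest_audience(flags))
```

Keeping the rationale next to each rule means every flag can be explained to the parent, which matters for the trust findings discussed later in the case study.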

✨

DESIGN TECHNICAL ROADBLOCK / CHALLENGES

Balancing intervention with user friction

Learning from learnability and trust constraints

During early prototyping of the AI risk detection, we designed generic "Sensitive Info Found" alerts.

However, testing revealed a major roadblock: users struggled with the "learnability" of the feature and lacked trust in the AI's recommendations.

The Pivot on Explanations:

Users needed deeper contextual explanations as to why a specific image was flagged as a risk, rather than just generic alerts. We adapted by planning to provide detailed, educational rationale alongside every system privacy recommendation.
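The difference between the generic alert and the explained alert can be sketched in a few lines. This is an illustrative example under stated assumptions: the `Detection` type, the `RATIONALES` copy, and both function names are hypothetical and were not part of the tested prototype.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Detection:
    kind: str    # category of the finding, e.g. "face" or "geotag"
    detail: str  # short description of what was found


# Hypothetical educational copy, keyed by detection kind (assumed wording).
RATIONALES = {
    "face": "A clearly visible face can be copied and reused outside your control.",
    "geotag": "A location tag tells strangers where your child spends time.",
    "school_logo": "A school logo or uniform narrows down where your child studies.",
}

FALLBACK = "This detail may help someone identify your child."


def generic_alert(detections: List[Detection]) -> str:
    """Early prototype behaviour: one message, no reason given."""
    return "Sensitive Info Found" if detections else ""


def explained_alerts(detections: List[Detection]) -> List[str]:
    """Revised behaviour: one message per finding, each with its rationale."""
    return [
        f"{d.detail}: {RATIONALES.get(d.kind, FALLBACK)}"
        for d in detections
    ]


found = [Detection("face", "Child's face detected"),
         Detection("geotag", "Location tag 'Austin, TX'")]
print(generic_alert(found))
for line in explained_alerts(found):
    print(line)
```

The fallback message is a design choice in this sketch: even when no specific copy exists for a finding, the parent still sees a reason rather than a bare warning.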

✨

KEY TAKEAWAY

This experience reinforced the critical importance

of building trust through transparency when designing

AI interventions for sensitive user data.

✨

FROM FIRST DRAFT TO FINAL DESIGN

After addressing learnability constraints, we evolved the interface into a validated flow

ENABLE MY PRIVACY PROTECTION SCREEN

AI IMAGE SCANNING & PROGRESS SCREEN

SENSITIVE INFO FOUND ALERTS

EDIT, BLUR, AND REPLACE TOOLS

✨

USABILITY TESTING

Testing the Experience Before Perfecting It

Pilot first, data next, insights always

We conducted rigorous testing using Cognitive Walkthroughs, Expert Evaluations with 10 HCI professionals, and moderated Think-Aloud usability testing with 5 parent participants.

Quantitative Metrics & Qualitative Feedback:

Users found the interface intuitive and reported that the feature significantly heightened their privacy awareness and influenced how they wrote captions and managed tags. Parents appreciated the tool's potential to support informed decision-making without completely restricting their ability to share.

✨

LEARNINGS FROM USABILITY TESTING

Testing revealed friction points that we immediately documented for future iterations

Observe first, adapt fast and refine

Hidden Activation Controls

Before:

The toggle button required to activate the Sharenting Protection feature was not prominent enough and was easily missed by users.

After:

Recommended making the opt-in process highly discoverable and developing a comprehensive onboarding flow for first-time users.

Confusing Manual Tools

Before:

Participants found the manual masking, blurring, and pixelation tools confusing to use.

After:

Recommended clearer instructions and expanded editing toolkits including automatic facial recognition redaction.
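To make the pixelation option concrete, here is a minimal sketch of block pixelation applied to a rectangular region, such as a detected face. It assumes a grayscale image stored as a list of rows and a bounding box supplied by some upstream detector; the function name `pixelate` and its parameters are hypothetical, and a real implementation would operate on actual image data.

```python
from typing import List, Tuple

Image = List[List[int]]  # grayscale pixels, one inner list per row


def pixelate(image: Image, box: Tuple[int, int, int, int], block: int = 2) -> Image:
    """Return a copy of `image` with the region `box` pixelated.

    `box` is (top, left, bottom, right) with bottom and right exclusive.
    Each `block` x `block` tile inside the box is replaced by its average value.
    """
    top, left, bottom, right = box
    out = [row[:] for row in image]  # copy so the original stays untouched
    for y0 in range(top, bottom, block):
        for x0 in range(left, right, block):
            ys = range(y0, min(y0 + block, bottom))
            xs = range(x0, min(x0 + block, right))
            tile = [image[y][x] for y in ys for x in xs]
            average = sum(tile) // len(tile)
            for y in ys:
                for x in xs:
                    out[y][x] = average
    return out


photo = [
    [0, 10, 20, 30],
    [40, 50, 60, 70],
    [80, 90, 100, 110],
    [120, 130, 140, 150],
]
# Pixelate only the top-left 2x2 region (a stand-in for a detected face).
for row in pixelate(photo, (0, 0, 2, 2)):
    print(row)
```

Automatic redaction, as recommended above, would amount to calling a function like this on every bounding box the detector returns, so the parent does not have to draw the mask by hand.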

✨

USABILITY TESTING TURNED GUESSES INTO REAL INSIGHTS

Participants reported the feature significantly

increased their privacy awareness, suggesting the design

supported responsible sharing

without limiting parental pride.

✨

LEARNINGS

Reflecting, Learning, and Growing Through Every Project

Learning from privacy and AI design

Transparency Builds Trust:

Users will not adopt AI recommendations blindly; providing detailed, educational rationale is essential for feature learnability and user trust.

Integration over Invention:

By embedding the Sharenting Protection directly into the existing Instagram posting flow, we reduced friction and ensured the intervention met parents where they already were.

Balancing Empathy with Security:

A successful social computing product must respect the parent's desire for connection while firmly protecting the child's right to privacy and a secure digital footprint.

✨

LEARNINGS

Designing for Dual Users

Pilot first, data next, insights always

Listen First:

Conducting heuristic reviews and interviews early set the right direction for the architecture.

Design for Trust:

Transparency, progress indicators, and friendly copy built parent confidence to use the tool.

Collaborate with Devs Early:

Working closely with engineering during the ideation phase ensured our designs were both scalable and technically feasible.

Hi

Your next UX designer is one email away 😇

I’m currently open for full-time roles and collaborations where I can bring user-centered magic to the table ✨

Made with 💖 and 🍵 by Nandini © 2026
